I’ll never forget the day I was first introduced to AI in an academic environment. I was sitting in my 11th-grade chemistry class when my friend asked me, “Have you heard of ChatGPT?”

That was three years ago. Now, you can’t escape generative AI, no matter which way you turn. There are ads for AI “friends” in the New York City Subway, AI “singers” and “actors” are turning up in entertainment, and the Gemini AI overview is the first thing you see when you do a quick Google search. It feels impossible to escape this new technology.

Generative AI has fundamentally changed the learning experience for college students, presenting them with conflicting emotions about their education and a lack of clear guidance from their institutions about how they can and cannot use AI in their coursework.

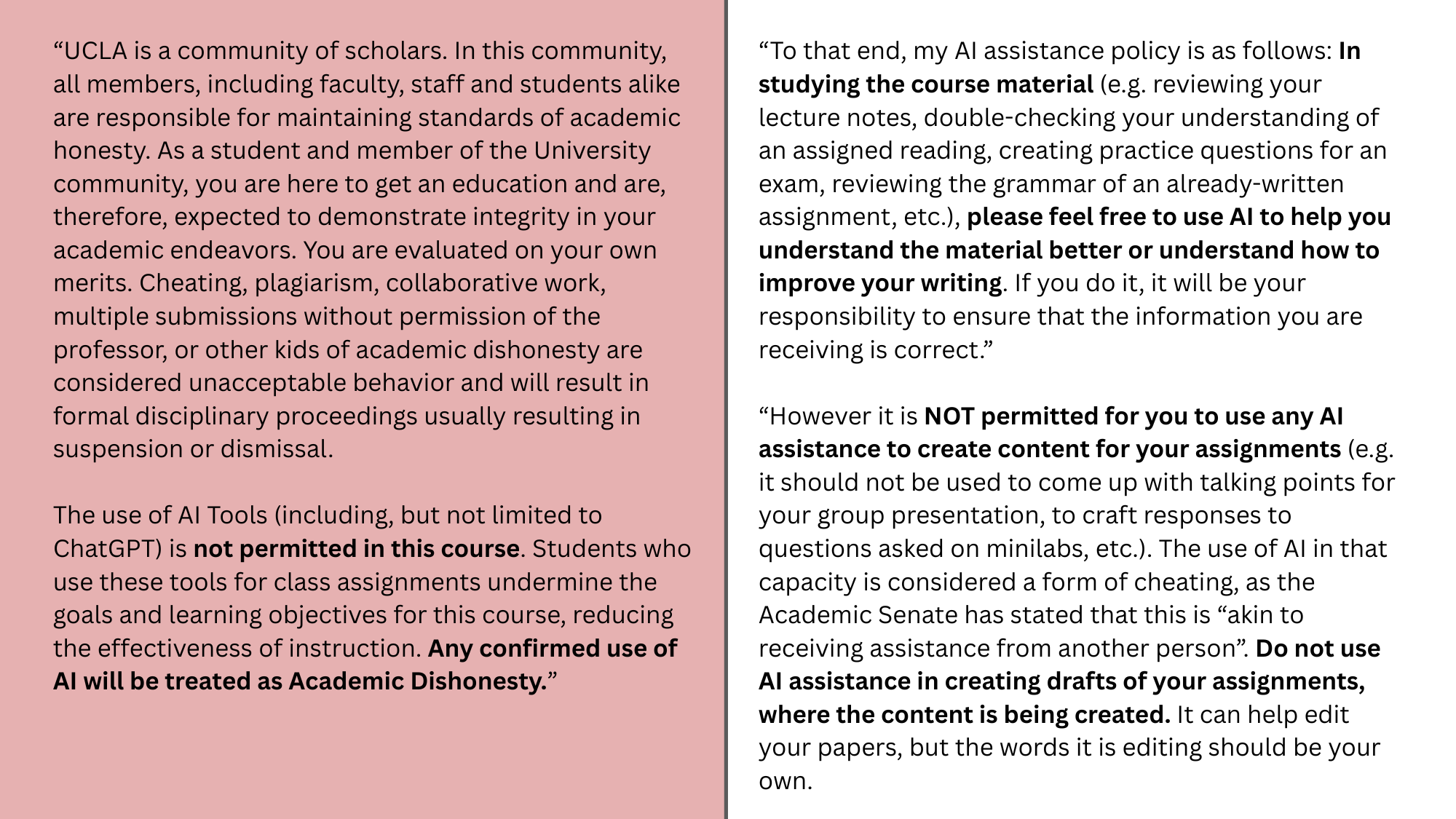

As a second-year student at UCLA, I have seen this firsthand. Every class I take has an AI policy in its syllabus that derives from the school-wide policy on UCLA’s website, which states:

“Unless otherwise specified by the faculty member, all submissions … must either be the Student’s own work, or must clearly acknowledge the source.”

It also states that students should consult with their instructors about the acceptable use of GenAI for each course — and those uses vary significantly from course to course, even within the same academic department.

On the first day of classes, each professor of mine shared their respective AI policies. I am a psychology major, and two of my course syllabi highlight these varying policies.

One of my courses, for example, flat-out bans the use of AI, equating it with cheating or plagiarism. In the syllabus for another course, focused on sensation and perception, my professor outlined a strong association between using AI for assignments and inadequate learning and declining memory retrieval. This professor allows the use of AI to deepen understanding of class concepts, but not to create the work for you.

The realities of navigating these varying policies are challenging, something I have found personally and something I heard in speaking to other students at other institutions for this story.

AI in the Classroom

When generative AI is introduced into the classroom, several interesting points arise. Of course, the first concern is academic integrity. Generative AI enables a new form of cheating: any student can simply copy and paste their class assignments into a chatbot like ChatGPT or Google Gemini, and within seconds, the assignment will be completed for them. This easy access to cheating leaves professors fearful that none of their students’ work is honest.

My communications professor, who asked to be anonymous for this story, said that they believe AI can be a powerful tool in education, but only to a certain extent. We talked about how AI can be used for good.

“It’s a tool that allows things to be more interactive, engaging, and allows for different types of learners to be engaged. And that, you know, I think is a great tool, and I applaud that,” they said.

Personally, I have used AI as a study tool. I regularly use Quizlet, an online study tool that generates digital flashcards and interactive learning activities and integrates AI to create practice tests, study guides, and more. Another study tool that uses AI is Gizmo. Similar to Quizlet, Gizmo uses AI to make studying more efficient. When I use Gizmo, all I have to do is upload my class lecture notes, and the technology transforms them into active recall quizzes. If I need further guidance, an “AI Tutor” will explain each concept to me step by step. These are just two examples of the countless online study resources that employ AI to enhance and customize studying.

I talked to two students about their experiences with AI at the academic level: Clara McKoy, a second-year student at UCLA, and Ethan Carter, a second-year student at Occidental College. They both agreed that generative AI’s integration into college life has its pros and cons. On one hand, McKoy and Carter believe AI is useful as a study tool for generating practice exams and explanations of unclear concepts. On the other hand, the boundary between acceptable and unacceptable AI use is blurry.

McKoy explained how she feels guilty when she uses AI, knowing that AI reduces human connection and may diminish curiosity and cognitive development; she is also concerned about AI’s environmental impacts and reliability issues.

Both students expressed conflicting feelings about how they use AI. When I asked each whether they believe GenAI has a positive or negative net impact on higher education as a whole, they both said the impact was negative. But when I asked them whether GenAI has been advantageous or disadvantageous to them personally as students, they both agreed it has helped them in the long run. Carter said it is very easy to fall into the trap of using AI to do his assignments for him, and he’s had to take his own measures to prevent that.

“I’ve tried to move away from it a lot, and do more physical things, like writing in my notebook, instead of typing on my computer,” he said.

My communications professor encourages the usage of these kinds of AI tools by students in the academic environment. However, she is critical of using AI to complete assignments, arguing that it undermines the purpose of a college education: to develop critical thinking skills and learn how to make one’s own decisions.

“I’m okay with you using AI as a tool to help you think about things,” she said. “But I am not okay with you using AI to think for you or write for you.”

Mixed Messages about AI

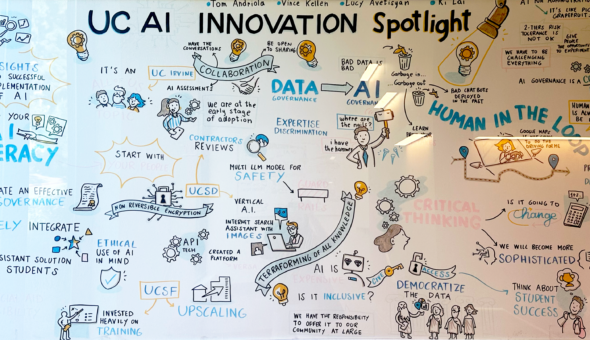

As a student navigating college in an AI-enabled world, it is difficult to face inconsistent AI policies from class to class and a schoolwide policy that, in my opinion, is at best a little haphazard, and, at worst, contradictory: Even as students are restricted in using AI in the classroom, the school is developing several AI projects and proudly publicizing them.

On the one hand, the university frowns on the use of AI to complete assignments. On the other, the administration regularly touts potential benefits for education. This is hardly a surprise given that UCLA promotes itself as the “birthplace of the internet.”

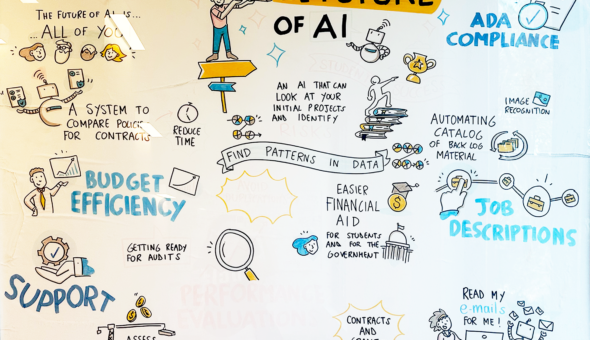

For example, UCLA has created the AI Innovation Initiative, a “campus-wide effort to build AI fluency, foster innovation, and drive responsible AI adoption.” This initiative offers many AI tools and events for students, faculty, and staff. UCLA offers resources with Google Gemini, Microsoft Copilot, OpenAI ChatGPT, and Google NotebookLM. In addition, UCLA has hosted many events, such as “AI in Action: Flash Talks on AI Use in the Classroom,” “A Conversation with AI: Hands-on Demonstration of ChatGPT & Other AI Tools,” and “ChatGPT for Programming: Exploring AI as a Potential Equity-Lever for Learning to Code?” Along with these initiatives, UCLA has introduced many pilot projects for AI experimentation. One of these projects is BruinBot, an LLM-backed course planner designed to help students navigate course planning and academic progression at large public schools like UCLA. One could argue that this is a helpful initiative for navigating the degree-mapping process; however, it may reduce the human connection students have with their advisors and, like all technology, it is susceptible to errors or glitches that could potentially harm students.

UCLA’s media relations department did not respond to multiple requests to be interviewed for this story.

UCLA’s “True Bruin Values” highlight the five fundamental pillars of Integrity, Excellence, Accountability, Respect, and Service. When generative AI comes into play, it’s hard to draw the line between what follows and what bends these moral principles. At most prestigious universities, especially an R1 university like UCLA, critical thinking and innovation are not only encouraged but expected of students.

Generative AI in higher education affects students’ cognition, something that is critical in academia. Generative AI has the potential to erode memory retention, problem-solving, critical thinking, and creativity, and it makes cognitive offloading (using external tools such as AI or the internet to perform cognitive tasks) easy.

In my experience, by getting AI to do your work for you, you are doing yourself the biggest disservice: losing your cognitive abilities and cheating your way through school, something we pay thousands and thousands of dollars for.